Successful AI Adoption Starts Before Deployment: Is Your Organisation AI-Ready?

In the rush to implement AI solutions, it’s easy to focus on the technology and overlook a crucial fact: successful AI adoption begins long before you deploy any system.

Many organisations have leapt into AI projects only to find that excitement fades and ROI remains elusive. In 2025, for example, only about one-third of companies reported meaningful returns beyond initial AI pilots – a shortfall often blamed on “AI FOMO” (fear of missing out) driving hasty, hype-driven initiatives.

The lesson is clear: real, sustainable value from AI comes not from the tool alone, but from organisational readiness – the groundwork across strategy, culture, data, and governance that determines whether AI will flourish or flop.

Align AI with Business Strategy and Value

One of the biggest pitfalls in AI adoption is treating AI as a shiny solution in search of a problem. Effective AI initiatives start with a clear strategy and business alignment. This means pinning down the specific business objectives or pain points that AI will address and articulating how those translate into measurable value. Senior leaders should ask: “Are we pursuing AI to solve a defined business challenge, or just because we feel we should?”

Grounding your AI efforts in business value not only guards against adopting technology for its own sake – it also smooths the path to user adoption and ROI. When people see that a new AI Copilot or assistant is targeted at real inefficiencies or opportunities in their workflow, they are far more likely to use it enthusiastically and effectively. In contrast, vague AI projects launched due to FOMO often result in “inflexible systems built on hype rather than problem-solving,” yielding little long-term impact. By starting with strategic intent and a solid business case, you ensure that AI deployment is driven by purpose. This upfront clarity helps in creating a structured roadmap for AI rollout – a phased plan that prioritises high-value use cases, sets success criteria, and aligns with your organisation’s goals.

Example

In the legal sector, instead of generically saying “let’s use AI in our firm,” a strategic approach might identify contract review as a bottleneck. The firm could then plan an AI Copilot pilot to automatically summarise lengthy contracts and flag key clauses, aiming to cut review time by, say, 30%. By defining this goal and how it ties to client service improvement, leadership builds a compelling case for AI – one that stakeholders can rally behind.

Cultivate a Culture for AI Adoption

Even the best AI solution will falter if people don’t use it. Organisational readiness is as much about people and culture as it is about tech. Senior leaders need to prepare their workforce to embrace AI-driven change. This involves communication, education, and empowerment well before the AI tool goes live.

Start by building excitement and buy-in. Explain the “why” of the AI initiative: how it will make work easier, improve results, or boost innovation. Early involvement of end users – for example, by identifying “AI champions” or a cross-functional team to pilot the tool – can create a sense of ownership. When users feel they have a stake in shaping the solution and its success, their engagement rises.

Training and upskilling are equally critical. Offering practical training sessions, demos, and hands-on workshops demystifies AI and helps employees become comfortable with new tools like Microsoft 365 Copilot. Rather than dropping AI into people’s laps and expecting instant adoption, progressive organisations invest in AI literacy and change management. They might run interactive “day-in-the-life with Copilot” sessions for different roles (e.g. how a marketer can use AI to draft a campaign brief, or how a lawyer can use it for research). This reduces fear and builds confidence and competence among staff.

Culturally, leadership should promote an environment where using AI is encouraged and rewarded. Highlight quick wins and success stories internally – e.g., the finance team that used Copilot to automate parts of budget reporting, saving hours – to show colleagues the tangible benefits. It’s also important to address mindset: reassure employees that AI is a tool to assist, not replace them, and that their judgement remains vital. When people understand that AI will augment their expertise (and not render it obsolete), they are more likely to engage positively. In short, AI adoption is a human endeavour: by tending to culture and change, companies ensure their shiny new AI doesn’t sit on the shelf but is actively driving productivity. In fact, strong user adoption acts as a force multiplier for AI’s impact – the more people using the system effectively, the more value it creates across the organisation.

Get Your Data and Security House in Order

AI readiness also demands a hard look at your data foundations and security posture. AI systems like Copilot thrive on data – they need access to the right information to generate useful insights or automation. So, before deploying AI, organisations should audit and prepare their data environment.

Data maturity means having your data digitised, integrated, and of good quality. Is your content (documents, emails, knowledge bases, etc.) well-organised and accessible to the AI in a meaningful way? Are there silos that need connecting, or outdated records that need cleaning? For example, if a law firm wants AI to help with research, it must ensure its case archives and knowledge repositories are up to date and searchable. Part of readiness is also applying metadata or labels to important information – this can help AI locate and respect sensitive data. In regulated industries like legal or finance, knowing where personal or confidential data resides (and who can access it) is fundamental before turning on an AI assistant.

Hand in hand with data quality is data security. Introducing AI should never introduce new risks to client data or company secrets. One key preparation step is to verify that data access controls are tight and appropriate – in other words, check that information isn’t “over-shared.” If your internal file permissions are lax (e.g. sensitive folders accessible to all staff), an AI tool might inadvertently surface something to an unauthorised person.

A readiness exercise might include applying or reviewing sensitivity labels, user access rights, and other compliance measures so that when Copilot comes online, it only shows people what they are meant to see. This is especially pertinent in sectors like law, where client confidentiality is paramount; you must ensure the AI cannot become an unwitting channel for data leakage.
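As a rough illustration of the over-sharing check described above, here is a minimal Python sketch. It assumes you have already exported an inventory of folders with their sensitivity labels and the groups that can read them. The group names, label names, and the `Resource` structure are all hypothetical and do not correspond to any particular Microsoft 365 API.

```python
from dataclasses import dataclass

# Hypothetical group names that effectively mean "everyone"; adjust to your directory.
BROAD_GROUPS = {"All Staff", "Everyone"}
# Hypothetical sensitivity labels that should never be broadly readable.
RESTRICTED_LABELS = {"Confidential", "Client Privileged"}


@dataclass(frozen=True)
class Resource:
    path: str
    label: str
    groups_with_access: frozenset


def find_overshared(resources):
    """Return restricted resources that at least one broad group can read."""
    return [
        r for r in resources
        if r.label in RESTRICTED_LABELS and r.groups_with_access & BROAD_GROUPS
    ]


# Illustrative inventory; in practice this would come from your own access export.
inventory = [
    Resource("/clients/acme/contracts", "Client Privileged", frozenset({"All Staff"})),
    Resource("/clients/acme/invoices", "Confidential", frozenset({"Finance"})),
    Resource("/handbook", "General", frozenset({"All Staff"})),
]

for r in find_overshared(inventory):
    print(f"Review access: {r.path} ({r.label})")
```

Anything the check flags is a candidate for tighter permissions before the AI assistant is switched on, since the assistant can surface whatever a user is already able to open.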

Another security consideration is choosing the right AI platform with a robust trust model. Microsoft 365 Copilot, for instance, runs within your own M365 tenant and abides by the enterprise security, privacy, and compliance controls you already have in place. Crucially, Microsoft has stated that Copilot does not use your organisation’s data to train the underlying AI models. All of this means if you’ve selected Copilot, you have a head start on security: your data stays within your cloud boundary, and the organisational content behind a response is limited to what the requesting user is already permitted to access, rather than being shared with other organisations.

If you haven’t yet picked an AI solution (or are considering multiple tools), factor security heavily into that decision. An experienced AI partner can help evaluate options for different use cases – weighing questions like: Does the solution keep data onshore? Does it allow you to segregate and protect sensitive info? Can you control what data flows into or out of the AI? It’s wise to favour enterprise-grade AI tools where the security model is transparent and aligns with your policies. In short, being AI-ready means baking in data privacy, security, and compliance from day one, not as an afterthought. That foundation of trust will let your organisation and its people confidently use AI to its fullest potential.

Establish Robust Governance and Trust

Alongside culture and data, governance is a key pillar of AI readiness. This is about setting the rules of the road for AI usage and ensuring ongoing oversight. When deploying a powerful tool like Copilot, senior leaders must ask: What guidelines and guardrails do we need so that AI is used responsibly and effectively here?

A good starting point is to develop or refine an AI usage policy for your organisation. This policy should spell out how employees are allowed to use AI tools, what data they can or cannot input, and for what purposes the AI is approved. For example, a law firm’s policy might permit using an internal Copilot for drafting documents using client data (since it stays inside their secure environment) but forbid using public chatbot services for anything sensitive. The policy can also clarify roles and responsibilities – e.g. requiring human review of AI-generated content before it goes to a client, to ensure quality and accountability.
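One way to make such a policy concrete is to express it as a small lookup that training material or internal tooling can reference. The Python sketch below is only an illustration under assumed names: the tool identifiers, the three data classifications, and the deny-by-default rule for unlisted tools are hypothetical choices, not a prescribed standard.

```python
# Hypothetical data classifications, ordered from least to most sensitive.
LEVELS = {"public": 0, "internal": 1, "client": 2}

# Hypothetical policy: the most sensitive data class each approved tool may receive.
POLICY = {
    "internal-copilot": "client",  # stays inside the firm's secure environment
    "public-chatbot": "public",    # nothing sensitive may be entered
}


def is_permitted(tool, data_class):
    """Return True if the policy allows this class of data in this tool."""
    ceiling = POLICY.get(tool)
    if ceiling is None:
        # Tools not listed in the policy are denied by default.
        return False
    return LEVELS[data_class] <= LEVELS[ceiling]


print(is_permitted("internal-copilot", "client"))
print(is_permitted("public-chatbot", "client"))
```

The deny-by-default branch reflects the idea that a tool nobody has reviewed should be treated as unapproved until the organisation decides otherwise.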

Beyond policy documents, consider forming an AI governance committee or taskforce. This cross-functional group (IT, security, compliance, HR, and business unit leaders) can oversee the AI rollout and address any issues that arise. They would monitor usage, handle exceptions or ethical dilemmas, and update policies as needed. For instance, if employees start using a new generative AI app outside of sanctioned tools (the classic “shadow AI”), this committee would be alerted and decide how to respond – perhaps by rapidly evaluating that tool’s risks or encouraging use of an approved alternative. Having such governance structures in place reassures senior management (and regulators, where relevant) that AI is being adopted in a controlled, compliant manner.

Risk management is a key part of governance. As highlighted in our focus last month on “Risk, Trust and Security in AI,” organisations must proactively address the risks AI can introduce – from biases and inaccuracies in AI outputs to regulatory and reputational risks. Readiness means you’ve thought through these and have mitigation plans. For example, do you have a process for validating important AI-generated results? Are staff trained to spot and correct AI errors (which can and will happen)? On the compliance side, ensure your AI plans align with data protection laws (like GDPR) and industry-specific regulations. It’s much easier to build in compliance from the start than to retrofit it later. By establishing a governance and ethical framework upfront, you create trust – both internally among users and stakeholders, and externally with clients or customers who might be affected by your AI usage.

From Curiosity to Capability: The Roadmap to Implementation

Finally, being “AI-ready” means charting a practical path from idea to implementation. Rather than a single big-bang launch, successful AI adoption is usually iterative. We recommend developing a structured roadmap that breaks the journey into stages – such as an initial assessment and pilot, followed by broader deployment and continuous improvement.

In the early phase, organisations often start with a readiness assessment or pilot programme. This could involve rolling out Copilot to a small department or team first, to test the waters and gather feedback. During this phase, you can refine your use cases, address unforeseen challenges, and demonstrate quick wins. The insights from a pilot inform a more robust rollout plan for the wider enterprise. For example, you might discover through a pilot in the HR department that employees benefit greatly from an AI that drafts policy documents, whereas another feature is underused – shaping where to focus training for the company-wide launch.

The roadmap should also incorporate change management milestones (e.g. training schedules, town hall demos, champion network building) and technical checkpoints (e.g. complying with IT change control, scaling infrastructure if needed). By laying this out as a timeline with owners for each task, you turn the abstract idea of “let’s adopt AI” into a concrete project that can be managed and measured.

Crucially, plan for ongoing evaluation and optimisation post-deployment. AI adoption is not a one-time event; user needs and AI capabilities will evolve. Set up metrics to track usage and value (for instance, user adoption rates, time saved, satisfaction scores, costs saved and incremental revenue gained). Monitor these against your business case expectations. If certain departments lag in adoption, allocate support or refresher training there. If new use cases emerge from enthusiastic users, feed those into your next phase of AI expansion. A mature AI-ready organisation treats deployment as the beginning of a continuous improvement loop, where the AI solution is regularly tuned and expanded to deliver maximum value.
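The tracking described above can start as something very simple. The following Python sketch assumes you can obtain licensed and active user counts plus a time-saved estimate per department. The figures, the 60% adoption target, and the field names are invented for illustration and would need replacing with numbers from your own business case.

```python
from dataclasses import dataclass


@dataclass
class DepartmentUsage:
    name: str
    licensed_users: int
    active_users: int
    hours_saved: float  # estimated hours saved in the reporting period


def adoption_rate(dept):
    """Share of licensed users who actively used the tool."""
    if dept.licensed_users == 0:
        return 0.0
    return dept.active_users / dept.licensed_users


def lagging_departments(departments, target_rate):
    """Names of departments whose adoption falls below the target."""
    return [d.name for d in departments if adoption_rate(d) < target_rate]


# Illustrative figures only.
usage = [
    DepartmentUsage("Finance", licensed_users=40, active_users=34, hours_saved=120.0),
    DepartmentUsage("HR", licensed_users=25, active_users=9, hours_saved=18.5),
    DepartmentUsage("Legal", licensed_users=30, active_users=21, hours_saved=96.0),
]

TARGET_ADOPTION = 0.6  # hypothetical target taken from the business case

for d in usage:
    print(f"{d.name}: {adoption_rate(d):.0%} adoption, {d.hours_saved} hours saved")
print("Needs support:", lagging_departments(usage, TARGET_ADOPTION))
```

Departments that appear in the "needs support" list are the natural candidates for the refresher training mentioned above.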

Throughout this journey, having a partner with experience in AI deployments can accelerate progress. Expert advisors can provide methodologies and best practices for each stage of the roadmap – from assessing readiness and upskilling your team, to establishing governance and measuring impact. They bring an outside perspective and hard-won lessons to help you avoid common pitfalls. For example, First AI (as an AI solutions partner) often embeds specialists alongside client teams to jump-start capability and ensure knowledge transfer, so that the organisation builds its own lasting AI muscle. The end goal is not just to implement a tool, but to develop an AI-enabled organisation.

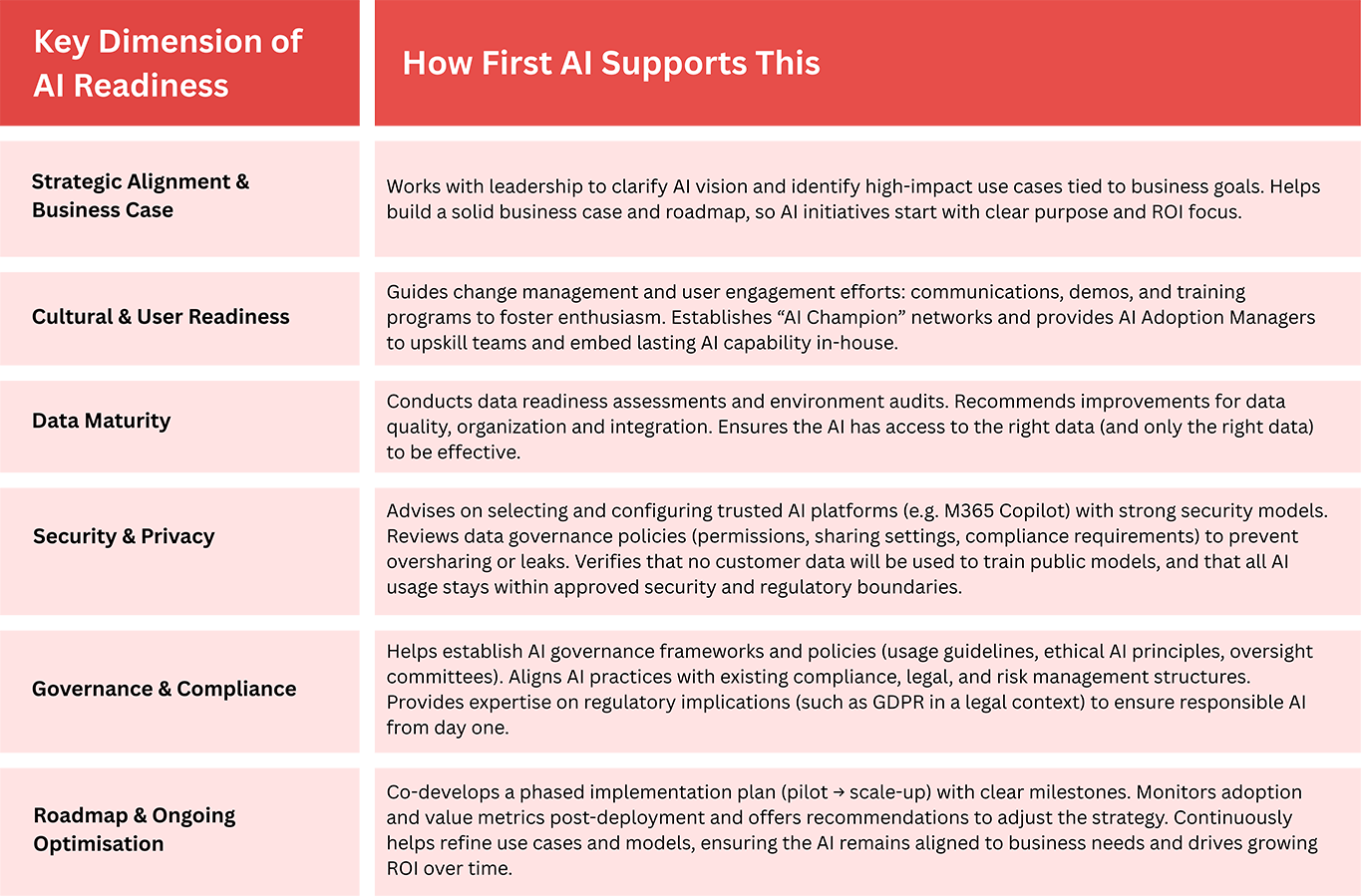

To summarise the discussion above, below is a framework of the key dimensions of AI readiness and how a partner like First AI supports clients in each area:

Conclusion

In essence, readiness for AI is the bedrock of successful AI adoption. For senior leaders eager to harness tools like Copilot, investing in these preparatory steps is not a luxury – it’s a necessity. By taking a strategic, people-centric, and governed approach before the technology lands, you set your organisation up to truly benefit from AI rather than becoming another cautionary tale of unrealised hype. From aligning AI with real business needs, to securing your data and building a receptive culture, each facet of readiness increases the likelihood that your AI initiative will deliver tangible, lasting value.

And remember, you don’t have to navigate this journey alone. Engaging a trusted AI partner such as First AI can accelerate and de-risk your efforts. With expertise in driving organisational change, data strategy, and AI governance, the right partner helps you move “from curiosity to controlled, strategic implementation.” The organisations that thrive with AI will be those that treated readiness not as a hurdle, but as an integral part of the transformation. AI success begins long before deployment – and with the proper groundwork, it is a success your organisation can confidently achieve.